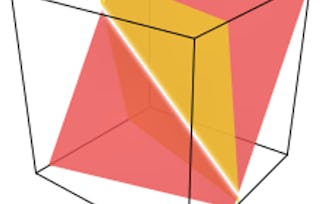

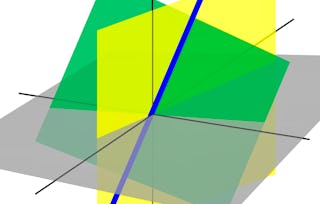

This is the third and final course in the Linear Algebra Specialization. It focuses on the theory and computations that arise from working with orthogonal vectors, including the study of orthogonal bases and orthogonal transformations. The course culminates in the theory of symmetric matrices, linking their algebraic properties with their geometric counterparts. These matrices arise more often in applications than any other class of matrices.

Linear Algebra: Orthogonality and Diagonalization

4,106 already enrolled

Details to know

Shareable certificate

Add to your LinkedIn profile

Assessments

11 assignments

Taught in English

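Build your subject-matter expertise

This course is part of the Linear Algebra from Elementary to Advanced Specialization

When you enroll in this course, you'll also be enrolled in this Specialization.

- Learn new concepts from industry experts

- Gain a foundational understanding of a subject or tool

- Develop job-relevant skills with hands-on projects

- Earn a shareable career certificate

There are 4 modules in this course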

Instructor

Instructor ratings

(12 ratings)

Learner reviews

- 5 stars: 91.83%
- 4 stars: 6.12%
- 3 stars: 2.04%
- 2 stars: 0%
- 1 star: 0%

Showing 3 of 49

MD

Reviewed on Nov 4, 2024

It is great, the guy on the videos knows a lot, its a pity he writes so fast :))

HK

Reviewed on Dec 8, 2024

Teach good. It explore some of my blind areas about diagonalization, eigen and orthogonal, repeated roots concern, etc.

CC

Reviewed on Mar 30, 2025

Well taught, clearly explained, thorough and helpful examples throughout
